
StreamReader Advanced Usages

Author: Moto Hira

This tutorial is the continuation of StreamReader Basic Usages.

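Warning

Starting with version 2.8, we are refactoring TorchAudio to transition it into a maintenance phase. As a result:

The APIs described in this tutorial are deprecated in 2.8 and will be removed in 2.9.

The decoding and encoding capabilities of PyTorch for both audio and video are being consolidated into TorchCodec.

Please see https://github.com/pytorch/audio/issues/3902 for more information.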

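This tutorial shows how to use StreamReader for:

Device inputs, such as microphone, webcam and screen recording

Generating synthetic audio / video

Applying preprocessing with custom filter expressions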

import torch

import torchaudio

print(torch.__version__)

print(torchaudio.__version__)

import IPython

import matplotlib.pyplot as plt

from torchaudio.io import StreamReader

base_url = "https://download.pytorch.org/torchaudio/tutorial-assets"

AUDIO_URL = f"{base_url}/Lab41-SRI-VOiCES-src-sp0307-ch127535-sg0042.wav"

VIDEO_URL = f"{base_url}/stream-api/NASAs_Most_Scientifically_Complex_Space_Observatory_Requires_Precision-MP4.mp4"

2.8.0+cu126

2.8.0

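Audio / Video device input

Given that the system has proper media devices and libavdevice is configured to use them, the streaming API can pull media streams from these devices.

To do this, we pass the additional parameters format and option to the constructor. format specifies the device component, and the option dictionary is specific to the specified component.

The exact arguments to pass depend on the system configuration. Please refer to https://ffmpeg.net.cn/ffmpeg-devices.html for details.

The following example illustrates how to do this on a MacBook Pro.

First, we need to check the available devices.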

$ ffmpeg -f avfoundation -list_devices true -i ""

[AVFoundation indev @ 0x143f04e50] AVFoundation video devices:

[AVFoundation indev @ 0x143f04e50] [0] FaceTime HD Camera

[AVFoundation indev @ 0x143f04e50] [1] Capture screen 0

[AVFoundation indev @ 0x143f04e50] AVFoundation audio devices:

[AVFoundation indev @ 0x143f04e50] [0] MacBook Pro Microphone

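We use FaceTime HD Camera as the video device (index 0) and MacBook Pro Microphone as the audio device (index 0).

If we do not pass any option, the device uses its default configuration. The decoder might not support that configuration.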

>>> StreamReader(
...     src="0:0",  # The first 0 means `FaceTime HD Camera`, and
...                 # the second 0 indicates `MacBook Pro Microphone`.
...     format="avfoundation",
... )

[avfoundation @ 0x125d4fe00] Selected framerate (29.970030) is not supported by the device.

[avfoundation @ 0x125d4fe00] Supported modes:

[avfoundation @ 0x125d4fe00] 1280x720@[1.000000 30.000000]fps

[avfoundation @ 0x125d4fe00] 640x480@[1.000000 30.000000]fps

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

...

RuntimeError: Failed to open the input: 0:0
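The "Supported modes" lines in the error log list the resolutions and frame-rate ranges the device accepts. As a minimal sketch, the log can be parsed to choose a frame rate for option. The regular expression and the helper name parse_supported_modes are assumptions made for illustration; they are not part of torchaudio or ffmpeg, and the log layout may differ on other systems.

```python
import re

# Log lines copied from the error output above.
LOG = """\
[avfoundation @ 0x125d4fe00] Supported modes:
[avfoundation @ 0x125d4fe00] 1280x720@[1.000000 30.000000]fps
[avfoundation @ 0x125d4fe00] 640x480@[1.000000 30.000000]fps
"""

# Matches "<width>x<height>@[<min_fps> <max_fps>]fps".
MODE_PATTERN = re.compile(r"(\d+)x(\d+)@\[([\d.]+) ([\d.]+)\]fps")


def parse_supported_modes(log):
    """Return (width, height, min_fps, max_fps) tuples found in the log.

    Hypothetical helper, written only for this illustration.
    """
    return [
        (int(w), int(h), float(lo), float(hi))
        for w, h, lo, hi in MODE_PATTERN.findall(log)
    ]


modes = parse_supported_modes(LOG)

# Use the highest frame rate advertised by the first listed mode.
_, _, _, max_fps = modes[0]
option = {"framerate": str(int(max_fps))}
print(modes)
print(option)
```

The resulting dictionary only covers the frame rate; a pixel format still has to be chosen separately.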

By providing option, we can change the format in which the device streams to one supported by the decoder.

>>> streamer = StreamReader(
...     src="0:0",
...     format="avfoundation",
...     option={"framerate": "30", "pixel_format": "bgr0"},
... )
>>> for i in range(streamer.num_src_streams):
...     print(streamer.get_src_stream_info(i))

SourceVideoStream(media_type='video', codec='rawvideo', codec_long_name='raw video', format='bgr0', bit_rate=0, width=640, height=480, frame_rate=30.0)

SourceAudioStream(media_type='audio', codec='pcm_f32le', codec_long_name='PCM 32-bit floating point little-endian', format='flt', bit_rate=3072000, sample_rate=48000.0, num_channels=2)
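Because the exact arguments depend on the machine, it can help to assemble them in one place before constructing the reader. The sketch below builds the same keyword arguments as the example above. The helper avfoundation_kwargs is hypothetical; only the argument names src, format and option, and the values shown, come from this tutorial.

```python
def avfoundation_kwargs(video_index, audio_index, framerate, pixel_format):
    """Build keyword arguments for StreamReader on an avfoundation device.

    Hypothetical helper for illustration; it is not part of torchaudio.
    """
    return {
        # "<video index>:<audio index>", as listed by `-list_devices true`.
        "src": f"{video_index}:{audio_index}",
        "format": "avfoundation",
        # Device options are passed as strings.
        "option": {"framerate": str(framerate), "pixel_format": pixel_format},
    }


kwargs = avfoundation_kwargs(0, 0, 30, "bgr0")
print(kwargs)
```

On a machine that actually has these devices, the dictionary could then be passed as StreamReader(**kwargs).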

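Synthetic source streams

As part of device integration, ffmpeg provides a "virtual device" interface. This interface provides synthetic audio / video data generation using libavfilter.

To use this, we set format=lavfi and provide a filter description to src.

The details of filter descriptions can be found at https://ffmpeg.net.cn/ffmpeg-filters.html

Audio Examples

Sine wave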

https://ffmpeg.net.cn/ffmpeg-filters.html#sine

StreamReader(src="sine=sample_rate=8000:frequency=360", format="lavfi")
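A lavfi source description such as the one above has the shape name=key=value:key=value. As a small sketch, such strings can be assembled from keyword arguments. The helper lavfi_source is hypothetical, and it performs no escaping, so it is only suitable for simple values like the ones used in this tutorial.

```python
def lavfi_source(name, **params):
    """Format a lavfi source description as "name=key=value:key=value".

    Hypothetical helper for illustration. Values containing characters
    that are special to ffmpeg (":", ",", quotes) are not escaped.
    """
    if not params:
        return name
    args = ":".join(f"{key}={value}" for key, value in params.items())
    return f"{name}={args}"


sine = lavfi_source("sine", sample_rate=8000, frequency=360)
noise = lavfi_source("anoisesrc", color="pink", sample_rate=8000, amplitude=0.5)
print(sine)
print(noise)
```

Either string could then be passed as src together with format="lavfi".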

Signal with arbitrary expression

https://ffmpeg.net.cn/ffmpeg-filters.html#aevalsrc

# 5 Hz binaural beats on a 360 Hz carrier

StreamReader(

src=(

'aevalsrc='

'sample_rate=8000:'

'exprs=0.1*sin(2*PI*(360-5/2)*t)|0.1*sin(2*PI*(360+5/2)*t)'

),

format='lavfi',

)
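The exprs option above holds two expressions separated by "|", one per channel. To make the arithmetic explicit, the sketch below evaluates the same two expressions in plain Python for one second of samples. It does not call ffmpeg; the constant and function names are chosen for this illustration only.

```python
import math

SAMPLE_RATE = 8000  # matches sample_rate=8000 in the filter description
CARRIER_HZ = 360
BEAT_HZ = 5
AMPLITUDE = 0.1

# The two channels sit half a beat below and above the carrier.
left_hz = CARRIER_HZ - BEAT_HZ / 2
right_hz = CARRIER_HZ + BEAT_HZ / 2


def binaural_sample(n):
    """Evaluate both channel expressions at sample index n."""
    t = n / SAMPLE_RATE
    left = AMPLITUDE * math.sin(2 * math.pi * left_hz * t)
    right = AMPLITUDE * math.sin(2 * math.pi * right_hz * t)
    return left, right


samples = [binaural_sample(n) for n in range(SAMPLE_RATE)]
peak = max(abs(value) for pair in samples for value in pair)
print(left_hz, right_hz, right_hz - left_hz)
print(peak)
```

The left and right channels differ by exactly the beat frequency, and neither channel exceeds the 0.1 amplitude factor.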

Noise

https://ffmpeg.net.cn/ffmpeg-filters.html#anoisesrc

StreamReader(src="anoisesrc=color=pink:sample_rate=8000:amplitude=0.5", format="lavfi")

Video Examples

Cellular automaton

https://ffmpeg.net.cn/ffmpeg-filters.html#cellauto

StreamReader(src="cellauto", format="lavfi")

Mandelbrot

https://ffmpeg.net.cn/ffmpeg-filters.html#mandelbrot

StreamReader(src="mandelbrot", format="lavfi")

MPlayer Test patterns

https://ffmpeg.net.cn/ffmpeg-filters.html#mptestsrc

StreamReader(src="mptestsrc", format="lavfi")

John Conway's Game of Life

https://ffmpeg.net.cn/ffmpeg-filters.html#life

StreamReader(src="life", format="lavfi")

Sierpinski carpet/triangle fractal

https://ffmpeg.net.cn/ffmpeg-filters.html#sierpinski

StreamReader(src="sierpinski", format="lavfi")

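Custom filters

When defining an output stream, you can use the add_audio_stream() and add_video_stream() methods.

These methods take a filter_desc argument, which is a string formatted according to ffmpeg's filter expression syntax.

The difference between add_basic_(audio|video)_stream and add_(audio|video)_stream is that add_basic_(audio|video)_stream constructs the filter expression and passes it to the same underlying implementation. Everything add_basic_(audio|video)_stream can do can also be achieved with add_(audio|video)_stream.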
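To illustrate the idea that the basic methods only assemble a filter expression, here is a simplified sketch of how resampling and format options might be turned into a filter_desc string. This is an approximation written for this tutorial, not torchaudio's actual implementation; the function name and its parameters are hypothetical.

```python
def basic_audio_filter(sample_rate=None, sample_fmt="fltp"):
    """Assemble an audio filter_desc from simple options.

    Hypothetical sketch: the real add_basic_audio_stream may build its
    expression differently.
    """
    parts = []
    if sample_rate is not None:
        parts.append(f"aresample={sample_rate}")
    if sample_fmt is not None:
        parts.append(f"aformat=sample_fmts={sample_fmt}")
    # "anull" passes audio through unchanged when nothing is requested.
    return ",".join(parts) if parts else "anull"


desc = basic_audio_filter(sample_rate=8000)
print(desc)
```

A string like this could be passed as filter_desc to add_audio_stream, which is also where extra filters can be spliced into the chain.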

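Note

When applying custom filters, the client code must convert the audio/video stream to one of the formats that torchaudio can convert to a tensor. This can be achieved, for example, by applying format=pix_fmts=rgb24 to a video stream and aformat=sample_fmts=fltp to an audio stream.

Each output stream has a separate filter graph. Therefore, a filter expression cannot use different input/output streams. However, it is possible to split one input stream into multiple streams and merge them later.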

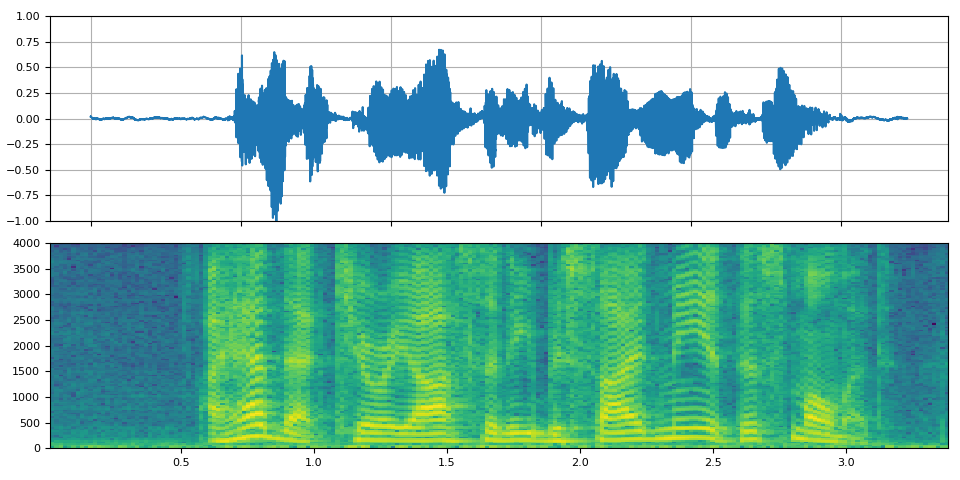

Audio Examples

# fmt: off
descs = [
    # No filtering
    "anull",
    # Apply a highpass filter then a lowpass filter
    "highpass=f=200,lowpass=f=1000",
    # Manipulate spectrogram: robot effect
    (
        "afftfilt="
        "real='hypot(re,im)*sin(0)':"
        "imag='hypot(re,im)*cos(0)':"
        "win_size=512:"
        "overlap=0.75"
    ),
    # Manipulate spectrogram: whisper effect
    (
        "afftfilt="
        "real='hypot(re,im)*cos((random(0)*2-1)*2*3.14)':"
        "imag='hypot(re,im)*sin((random(1)*2-1)*2*3.14)':"
        "win_size=128:"
        "overlap=0.8"
    ),
]
# fmt: on

sample_rate = 8000

streamer = StreamReader(AUDIO_URL)

for desc in descs:
    streamer.add_audio_stream(
        frames_per_chunk=40000,
        filter_desc=f"aresample={sample_rate},{desc},aformat=sample_fmts=fltp",
    )

chunks = next(streamer.stream())
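As a quick check of the numbers used above, the duration covered by one full chunk follows from frames_per_chunk and the sample rate after resampling. The helper name chunk_seconds is hypothetical; a chunk can also be shorter if the source ends before it is filled.

```python
def chunk_seconds(frames_per_chunk, frames_per_second):
    """Duration covered by one full chunk, in seconds."""
    return frames_per_chunk / frames_per_second


# 40000 frames at the resampled rate of 8000 Hz.
audio_seconds = chunk_seconds(40000, 8000)
print(audio_seconds)
```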

def _display(i):
    print("filter_desc:", streamer.get_out_stream_info(i).filter_description)
    fig, axs = plt.subplots(2, 1)
    waveform = chunks[i][:, 0]
    axs[0].plot(waveform)
    axs[0].grid(True)
    axs[0].set_ylim([-1, 1])
    plt.setp(axs[0].get_xticklabels(), visible=False)
    axs[1].specgram(waveform, Fs=sample_rate)
    fig.tight_layout()
    return IPython.display.Audio(chunks[i].T, rate=sample_rate)


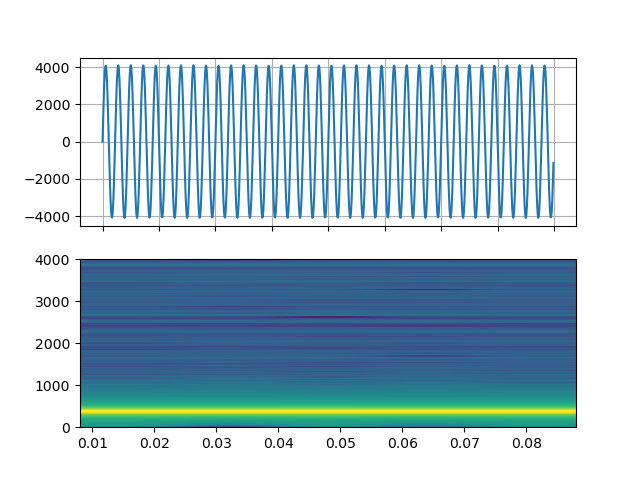

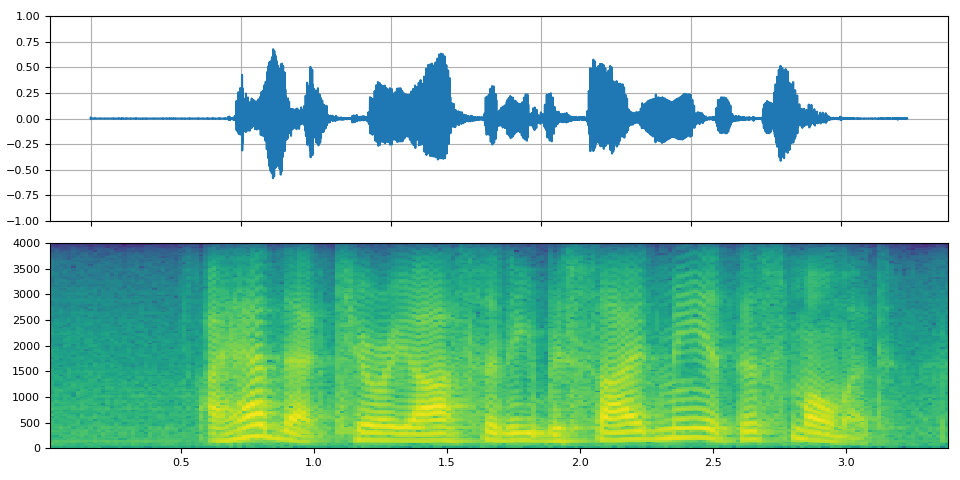

Original

_display(0)

filter_desc: aresample=8000,anull,aformat=sample_fmts=fltp

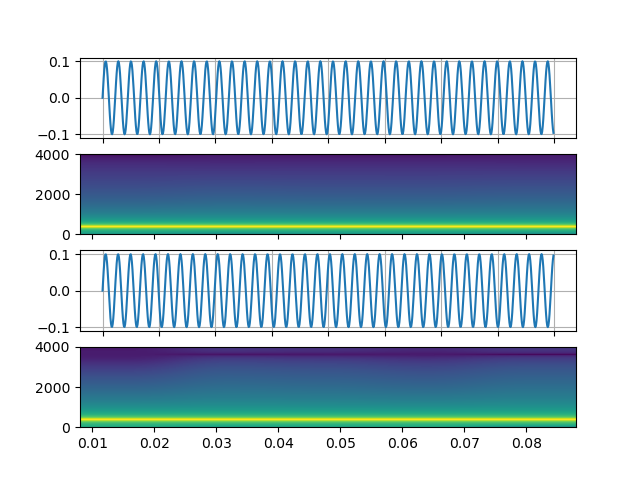

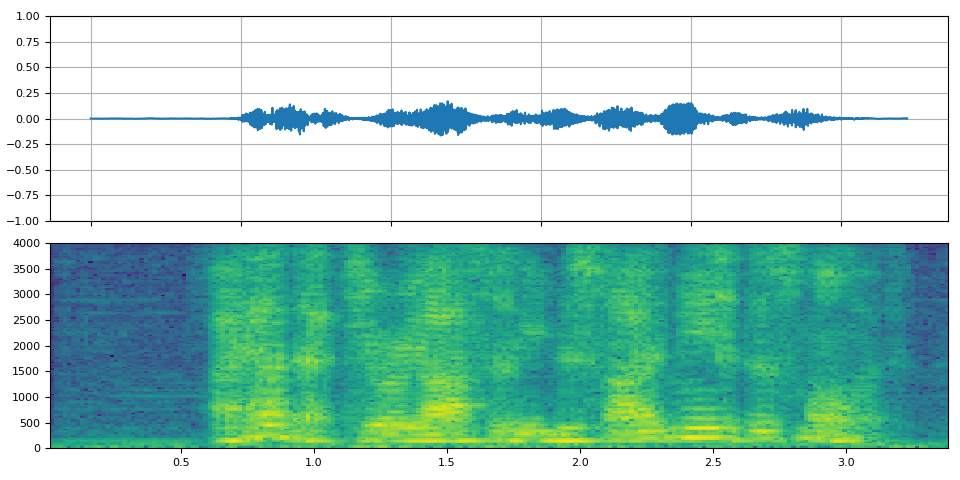

Highpass / lowpass filter

_display(1)

filter_desc: aresample=8000,highpass=f=200,lowpass=f=1000,aformat=sample_fmts=fltp

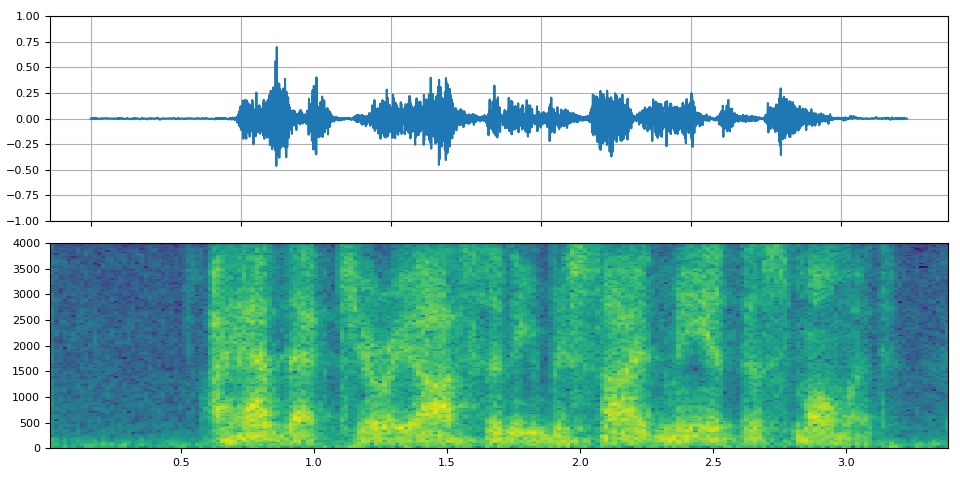

FFT filter - Robot 🤖

_display(2)

filter_desc: aresample=8000,afftfilt=real='hypot(re,im)*sin(0)':imag='hypot(re,im)*cos(0)':win_size=512:overlap=0.75,aformat=sample_fmts=fltp

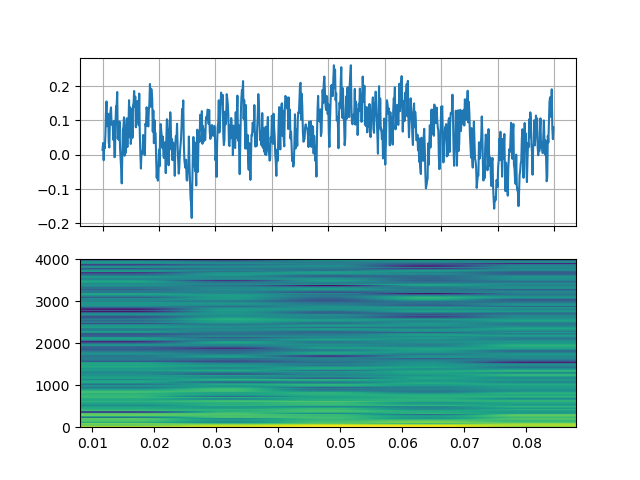
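One way to read the afftfilt expressions of the robot filter: since sin(0) is 0 and cos(0) is 1, each frequency bin keeps its magnitude hypot(re,im) while its real part becomes zero. The sketch below applies the same arithmetic to a few example complex values in plain Python. It does not call ffmpeg, and the input values are made up for illustration.

```python
import cmath
import math


def robot_bin(z):
    """Apply the robot filter's real/imag expressions to one FFT bin."""
    magnitude = abs(z)  # hypot(re, im)
    return complex(magnitude * math.sin(0), magnitude * math.cos(0))


# Made-up example bins with different magnitudes and phases.
bins = [complex(3, 4), complex(-1, 0), complex(0, -2)]
out = [robot_bin(z) for z in bins]

for before, after in zip(bins, out):
    print(abs(before), abs(after), cmath.phase(after))
```

Every output bin has the same phase regardless of the input phase, while the magnitudes are unchanged.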

FFT filter - Whisper

_display(3)

filter_desc: aresample=8000,afftfilt=real='hypot(re,im)*cos((random(0)*2-1)*2*3.14)':imag='hypot(re,im)*sin((random(1)*2-1)*2*3.14)':win_size=128:overlap=0.8,aformat=sample_fmts=fltp

Video Examples

# fmt: off
descs = [
    # No effect
    "null",
    # Split the input stream, flip a copy of the left half horizontally,
    # and overlay it on the right half.
    (
        "split [main][tmp];"
        "[tmp] crop=iw/2:ih:0:0, hflip [flip];"
        "[main][flip] overlay=W/2:0"
    ),
    # Edge detection
    "edgedetect=mode=canny",
    # Rotate the image by a random angle and fill the background with brown
    "rotate=angle=-random(1)*PI:fillcolor=brown",
    # Manipulate pixel values based on the coordinate
    "geq=r='X/W*r(X,Y)':g='(1-X/W)*g(X,Y)':b='(H-Y)/H*b(X,Y)'",
]
# fmt: on

streamer = StreamReader(VIDEO_URL)

for desc in descs:
    streamer.add_video_stream(
        frames_per_chunk=30,
        filter_desc=f"fps=10,{desc},format=pix_fmts=rgb24",
    )

streamer.seek(12)

chunks = next(streamer.stream())

def _display(i):
    print("filter_desc:", streamer.get_out_stream_info(i).filter_description)
    _, axs = plt.subplots(1, 3, figsize=(8, 1.9))
    chunk = chunks[i]
    for j in range(3):
        axs[j].imshow(chunk[10 * j + 1].permute(1, 2, 0))
        axs[j].set_axis_off()
    plt.tight_layout()
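The display helper picks frames 1, 11 and 21 out of each 30-frame chunk. As a rough sketch, their positions in the video can be estimated from the fps=10 filter and the seek(12) call. This assumes the first frame of the chunk sits exactly at the seek position and that frames are evenly spaced, which may not hold precisely for a real stream.

```python
FPS = 10           # from "fps=10" in filter_desc
SEEK_SECONDS = 12  # from streamer.seek(12)


def frame_timestamp(index):
    """Approximate timestamp of a frame within the chunk, in seconds.

    Assumes frame 0 is located exactly at the seek position.
    """
    return SEEK_SECONDS + index / FPS


shown = [10 * j + 1 for j in range(3)]
timestamps = [frame_timestamp(i) for i in shown]
print(shown)
print(timestamps)
```

Under these assumptions the three displayed frames are about one second apart, starting roughly 0.1 seconds after the seek position.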


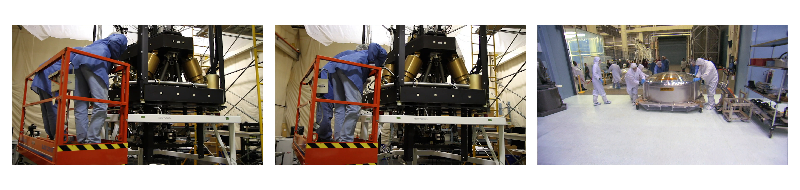

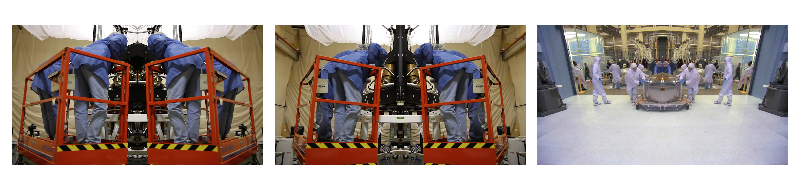

Original

_display(0)

filter_desc: fps=10,null,format=pix_fmts=rgb24

Mirror

_display(1)

filter_desc: fps=10,split [main][tmp];[tmp] crop=iw/2:ih:0:0, hflip [flip];[main][flip] overlay=W/2:0,format=pix_fmts=rgb24

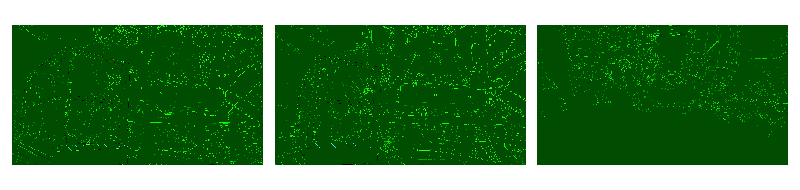
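The mirror filter graph can be read step by step: split duplicates the frame, crop=iw/2:ih:0:0 keeps the left half of one copy, hflip flips it, and overlay=W/2:0 pastes it onto the right half of the other copy. The sketch below mimics those steps on a tiny frame stored as nested lists. It is an illustration of the data flow for even widths only and does not call ffmpeg; the function names are hypothetical.

```python
def mirror_row(row):
    """Mimic split -> crop left half -> hflip -> overlay at W/2 on one row."""
    half = len(row) // 2
    flipped = row[:half][::-1]               # crop=iw/2:ih:0:0, then hflip
    out = list(row)                          # the untouched [main] copy
    out[half:half + len(flipped)] = flipped  # overlay=W/2:0
    return out


def mirror_frame(frame):
    """Apply the mirror effect to every row of a 2D frame."""
    return [mirror_row(row) for row in frame]


frame = [
    [1, 2, 3, 4],
    [5, 6, 7, 8],
]
mirrored = mirror_frame(frame)
print(mirrored)
```

The left half of each row is preserved and the right half becomes its mirror image.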

Edge detection

_display(2)

filter_desc: fps=10,edgedetect=mode=canny,format=pix_fmts=rgb24

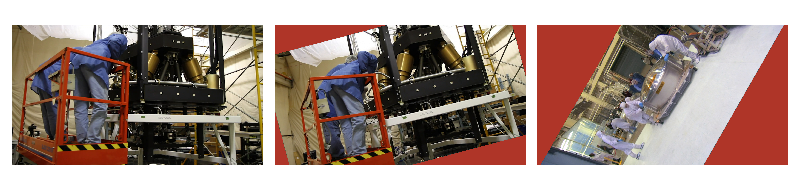

Random rotation

_display(3)

filter_desc: fps=10,rotate=angle=-random(1)*PI:fillcolor=brown,format=pix_fmts=rgb24

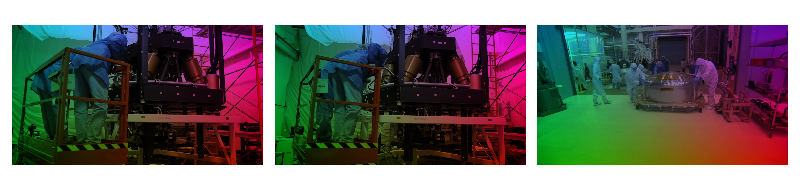

Pixel manipulation

_display(4)

filter_desc: fps=10,geq=r='X/W*r(X,Y)':g='(1-X/W)*g(X,Y)':b='(H-Y)/H*b(X,Y)',format=pix_fmts=rgb24
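The geq expressions scale each color channel by a factor that depends on the pixel coordinate: red grows from left to right, green fades from left to right, and blue fades from top to bottom. The sketch below evaluates the same three expressions for a single pixel in plain Python. It does not model how ffmpeg rounds or clips the result to 8-bit values, and the function name is hypothetical.

```python
def geq_pixel(x, y, width, height, rgb):
    """Evaluate the tutorial's geq expressions for one pixel.

    x, y are the pixel coordinates (X, Y); width, height are W, H.
    """
    r, g, b = rgb
    return (
        x / width * r,             # r='X/W*r(X,Y)'
        (1 - x / width) * g,       # g='(1-X/W)*g(X,Y)'
        (height - y) / height * b, # b='(H-Y)/H*b(X,Y)'
    )


top_left = geq_pixel(0, 0, 4, 4, (200, 100, 50))
center = geq_pixel(2, 2, 4, 4, (200, 100, 50))
print(top_left)
print(center)
```

At the top-left corner red is removed entirely while green and blue are untouched; toward the center each channel is scaled by one half.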

Total running time of the script: ( 0 minutes 19.054 seconds)