RReLU

- class torch.nn.RReLU(lower=0.125, upper=0.3333333333333333, inplace=False)

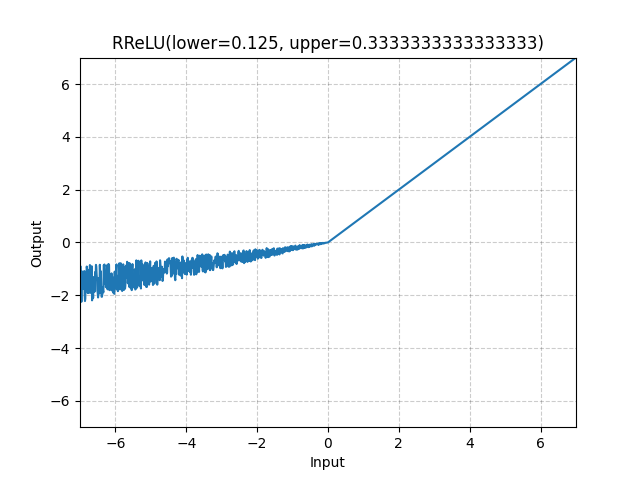

Applies the randomized leaky rectified linear unit function, element-wise.

Method described in the paper: Empirical Evaluation of Rectified Activations in Convolutional Network.

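The function is defined as

$$
\text{RReLU}(x) =
\begin{cases}
x & \text{if } x \geq 0 \\
a x & \text{otherwise}
\end{cases}
$$

where $a$ is randomly sampled from the uniform distribution $\mathcal{U}(\text{lower}, \text{upper})$ during training, while during evaluation $a$ is fixed to $a = \frac{\text{lower} + \text{upper}}{2}$.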
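To make the training/evaluation distinction concrete, here is a minimal pure-Python sketch of the formula. The helper `rrelu_reference` is hypothetical (it is not part of PyTorch) and works on plain lists of floats; PyTorch's real kernel is vectorized and uses its own random-number generator, so this only illustrates the math.

```python
import random
from typing import List, Optional


def rrelu_reference(
    xs: List[float],
    lower: float = 1.0 / 8,
    upper: float = 1.0 / 3,
    training: bool = False,
    rng: Optional[random.Random] = None,
) -> List[float]:
    """Hypothetical element-wise RReLU on a list of floats (illustration only)."""
    if rng is None:
        rng = random.Random()
    out = []
    for x in xs:
        if x >= 0:
            # Non-negative inputs pass through unchanged.
            out.append(x)
        else:
            # Training: draw a fresh slope a ~ U(lower, upper) for this element.
            # Evaluation: use the fixed slope a = (lower + upper) / 2.
            a = rng.uniform(lower, upper) if training else (lower + upper) / 2
            out.append(a * x)
    return out


# Evaluation mode with lower=0.1, upper=0.3 uses the fixed slope 0.2.
print(rrelu_reference([-4.0, 0.0, 2.0], lower=0.1, upper=0.3))
```

With the default bounds, the evaluation slope is $(1/8 + 1/3)/2 = 11/48 \approx 0.229$, so RReLU in evaluation mode behaves like a leaky ReLU with that fixed negative slope.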

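- Parameters

  - lower (float): lower bound of the uniform distribution. Default: 1/8
  - upper (float): upper bound of the uniform distribution. Default: 1/3
  - inplace (bool): can optionally do the operation in-place. Default: False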

- Shape

Input: $(*)$, where $*$ means any number of dimensions.

Output: $(*)$, same shape as the input.
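A short sketch, assuming a standard PyTorch install, of two points above: the output keeps the input's shape for any number of dimensions, and the `inplace` flag from the signature makes the module write its result back into the input tensor. The module is switched to evaluation mode here only so the slope is the deterministic $11/48$ from the default bounds; the tensor sizes are arbitrary.

```python
import torch
from torch import nn

m = nn.RReLU(inplace=True)  # default bounds: lower=1/8, upper=1/3
m.eval()  # evaluation mode: fixed slope (1/8 + 1/3) / 2 = 11/48

x = torch.randn(2, 3, 4)  # any number of dimensions works
x_before = x.clone()  # keep a copy, because inplace=True overwrites x
out = m(x)

print(out.shape == x.shape)  # output keeps the input's shape
print(out.data_ptr() == x.data_ptr())  # in-place: result shares x's storage
```

Because `x` is overwritten, any later code that needs the original values must work from a copy such as `x_before`.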

Examples

>>> import torch
>>> from torch import nn
>>> m = nn.RReLU(0.1, 0.3)
>>> input = torch.randn(2)
>>> output = m(input)
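Extending the example above, this sketch (assuming a standard PyTorch install; the input values are arbitrary) contrasts the two modes. A freshly constructed module starts in training mode, where each negative element gets its own random slope from $\mathcal{U}(0.1, 0.3)$; after `m.eval()` the slope is fixed to $(0.1 + 0.3)/2 = 0.2$, so the output should match `torch.nn.functional.leaky_relu` with `negative_slope=0.2`.

```python
import torch
import torch.nn.functional as F
from torch import nn

torch.manual_seed(0)  # make the random training-mode slopes reproducible

m = nn.RReLU(0.1, 0.3)
x = torch.tensor([-4.0, -1.0, 0.0, 2.0])

# Training mode: negative elements are scaled by random slopes in [0.1, 0.3].
m.train()
y_train = m(x)

# Evaluation mode: the slope is fixed to 0.2, i.e. a plain leaky ReLU.
m.eval()
y_eval = m(x)
y_leaky = F.leaky_relu(x, negative_slope=0.2)

print(y_train)
print(torch.allclose(y_eval, y_leaky))
```

Remember to call `model.eval()` before inference; otherwise RReLU keeps sampling slopes and the outputs vary from run to run.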